Umain Works

No One Scales AI Alone

The Scale Illusion

The first AI project in most organizations is a technical exercise. A proof of concept. A model that works in a sandbox. And that success produces a dangerous illusion — that scaling AI is simply "more of the same."

It is not.

Scaling AI has almost nothing to do with scaling technology. It requires changing how an organization decides, prioritizes, and operates. It is a problem of management, governance, and culture: structural, not technological, as Paulo recently argued. Scaling amplifies the gap between technical readiness and organizational readiness. Most organizations are not prepared for it because they invested everything in the technical piece and almost nothing in what surrounds it.

Roughly 70% of what determines whether an AI project survives or dies is non-technological. Executive sponsorship. Change management. Whether anyone has actually thought about how the model fits into the day-to-day work of real teams. I have seen excellent models that never left the laboratory because none of that existed around them.

How to Spot a Fragile Scale

Fragility has visible signals. I look for five.

- First, all the AI knowledge in the organization is concentrated in one person or a small team. If they leave, the project dies with them.

- Second, the company depends on a single technology vendor without truly understanding what it bought.

- Third, there is no AI roadmap connected to the business. What exists is a list of technical experiments with no link to real KPIs.

- Fourth, leadership cannot explain the purpose of its AI initiatives without relying on jargon.

- Fifth, and most dangerous, the organization confuses "having an AI project" with "scaling AI." Having a chatbot is not having a strategy.

When Partnerships Earn Their Keep

Partnerships become critical in three concrete moments.

- The first is the jump from pilot to production. That is where the organization suddenly needs capacity, reliability, and experience it rarely has in-house.

- The second is when the company needs capabilities that make no sense to build internally: governance, security, infrastructure, compliance. There is no reason for every business to reinvent these layers.

- The third is when credibility matters. When a board is approving a large investment, or a regulator is auditing a deployment, an ecosystem of reference partners validates the decision. It gives the people who approve budgets and the people who audit outcomes a reason to trust the plan.

No technology company grows alone. You grow with partners, or you don't grow.

That lesson has only become more relevant with AI.

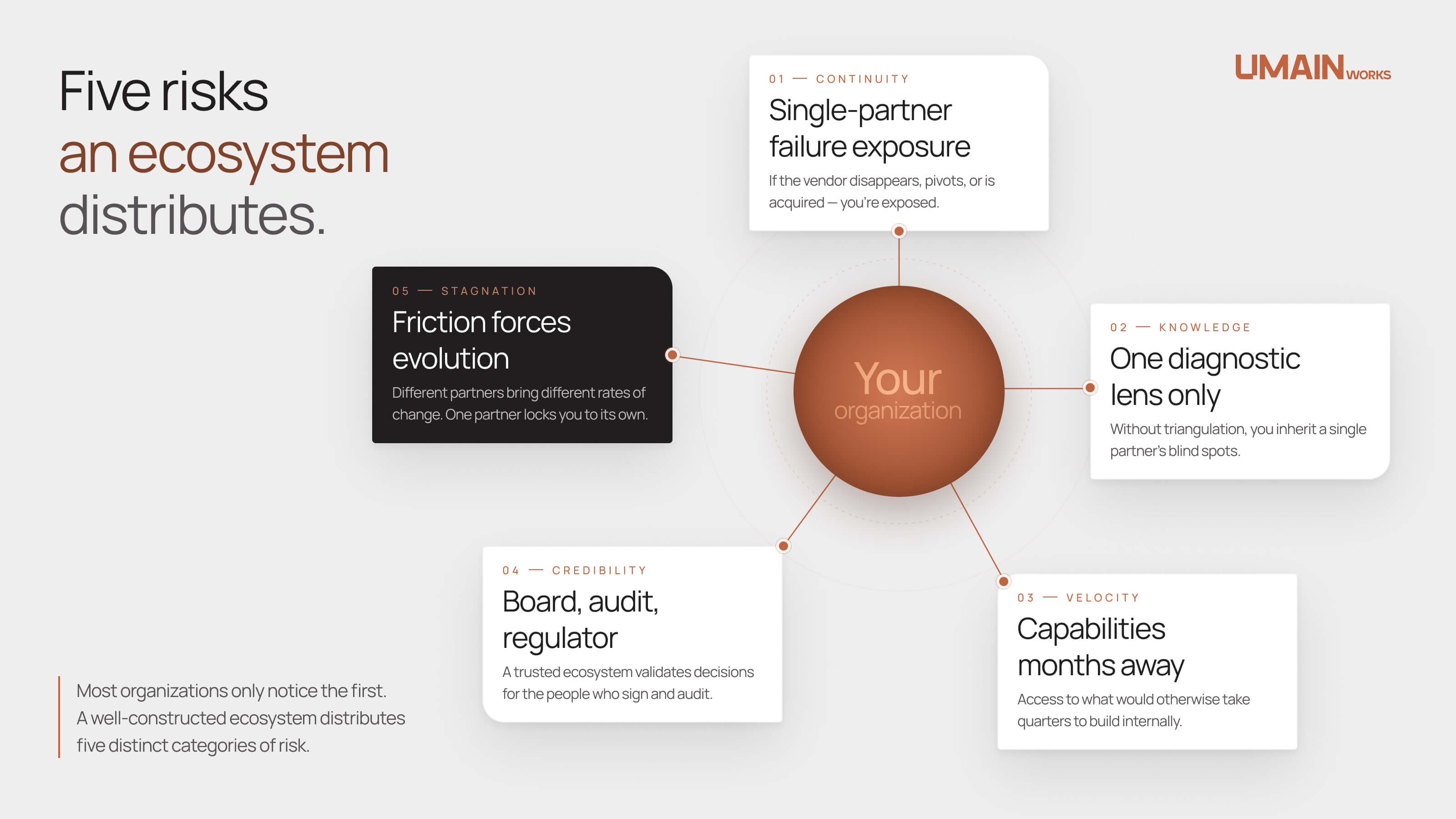

Five Risks an Ecosystem Distributes

The risk that matters most is rarely the risk that feels most urgent. A well-constructed ecosystem distributes five distinct categories of risk — and most organizations only notice the first.

- Continuity risk. If your single partner disappears, changes strategy, or changes ownership, you are exposed. An ecosystem spreads that risk.

- Knowledge risk. You stop depending on one view of the problem. Different partners bring different diagnostic lenses, and you get to triangulate.

- Velocity risk. You gain access to capabilities that would otherwise take months to build.

- Credibility risk. A solid ecosystem validates your decisions in front of internal and external stakeholders. It is easier to approve a budget, pass an audit, or prepare a regulatory submission when the underlying stack is already trusted by the market.

- Stagnation risk. This one is the most underestimated. Different partners bring different perspectives, and that friction forces the organization to evolve. A single partner, by contrast, tends to lock you into its own rate of change.

Partnerships That Aren't Partnerships

Not every agreement that calls itself a partnership is one.

The most common bad pattern is the commercial-convenience partnership disguised as a strategic alliance. Two companies sign an agreement, publish a press release, and then there is no substance — no joint delivery, no mutual investment, no real sharing of risk. The client sits in the middle and figures out fast that there is no depth behind the logo.

The second bad pattern is the partnership where one side has no skin in the game. If your partner risks nothing alongside you, it is not a partner — it is a reselling channel. I have seen situations where the integrator promises the world, the technology vendor delivers the software, and no one is accountable for the outcome. The client pays twice: first for the project, then to repair what was not done right.

This is how the market learned to confuse partnerships with lock-in. Historically, many vendors used the word "partnership" as a euphemism for dependence. And there is fault on both sides — vendors that design intentional dependency, and clients that do not read the terms, do not demand portability, and choose by lowest price instead of by the most open architecture.

Lock-in is not in the technology. It is in the design of the solution.

Three Principles We Practice at Umain

Because the difference between a partnership that opens options and a contract that closes them is architectural, we practice three principles.

- Architectural transparency. The client must understand what was built, where the data lives, and what happens if they change partners. If they do not understand, they are trapped — even when they think they are not.

- Clear intellectual property. Everything created for a client belongs to the client. Models, pipelines, documentation. The client can walk away tomorrow and take all of it.

- Open standards over proprietary dependence. Whenever possible, we use open standards and architectures that preserve portability. Our goal is for the client to stay with us because they want to — not because they have to.

When we work with a reference partner like IBM — as in our watsonx Orchestrate implementation practice — we are not just accessing technology. We are accessing decades of investment in research, security, compliance, and enterprise support. For a CEO approving a significant AI investment, knowing that the stack is built on technology already tested by thousands of organizations dramatically reduces perceived risk.

That is not marketing. It is practical risk arithmetic. The reference partner gives you the foundation; the consultancy that walks with you gives you the adaptation to your context. Together they create something that neither creates alone.

Sustainable Scale, Not Rapid Growth

The organizations that scale AI well make one decision the others do not: they invest in foundations before use cases.

They start with governance, data quality, team training, and executive alignment. It feels slow. It feels unsexy. But by the third or fourth project, they have something to stand on. The organizations that grow fast jump straight to the most visible use cases, accumulate technical and organizational debt, and by the fifth project they have a house of cards.

The pattern I see most often is what I call the AI sprint followed by the AI hangover. The organization, usually under pressure from its board or the market, launches five or six projects in parallel. It does not have the people to sustain them, the governance to govern them, or the processes to measure what is actually working. Teams burn out. Sponsors lose patience. Twelve months later, budgets are cut, teams are dispersed, and a quiet internal narrative takes hold: "AI doesn't work for us."

The problem was never AI. The problem was ambition misaligned with real execution capacity — what Ricardo has described as careless adoption, driven by pressure to replicate what competitors appear to be doing. And once the narrative "AI doesn't work for us" settles in, reversing it takes far more effort than scaling properly from the start ever would have. The speed created the problem; the slowness needed to correct it now feels even more unacceptable. The only way out is discipline — and someone with the courage to say "let's do less, but let's do it well."

Sustainable scale asks for trade-offs most leadership teams find difficult.

Accept that the first three to six months may produce no visible results — and communicate that internally from day one. Accept that you will have to say no to seductive use cases that do not align with the strategy. Accept that part of the investment goes into invisible things — governance, data quality, training — that generate no headlines but generate sustainability. And accept, perhaps hardest of all, that you will move slower than the competition in the short term, with the conviction that you will go further in the medium term.

That requires leadership maturity. And it is precisely the kind of confidence a solid ecosystem helps to build.

The Deeper Argument for Ecosystems

There is one argument for working in an ecosystem that rarely gets discussed, and it is the strongest one.

AI is evolving at a pace no single organization can match alone. The models of yesterday become obsolete. Frameworks shift. Regulation evolves. Having partners who live in this world full-time — who invest billions in R&D every year — is the most intelligent way to stay relevant without constantly rebuilding.

This is not about delegating responsibility. It is about sharing the burden of continuous evolution.

And that, in my experience, is the deeper case for working in an ecosystem: it is not only about today's project. It is about the capacity to keep evolving tomorrow.

No one scales AI alone. The companies that try learn it the hard way.

Start where it matters.

You’re under pressure to act, but clarity comes before tools.

That’s why we usually start with AI Value Discovery, a structured process to identify where AI creates measurable impact, before any solution is implemented.